AS FEATURED AT SAS INNOVATE 2026 · HACKATHON SUPERDEMO

For more than twenty years, anti-money laundering has been the same puzzle. Billions of transactions move through financial systems every day, and only a tiny fraction of them are tied to actual financial crime. Finding those cases is a needle-in-a-haystack problem, and missing even one is out of the question.

Rule-based systems narrowed the search.

Machine learning shrank the haystack.

Neither changed the underlying game. Agentic AI does.

Most compliance teams know AI is coming. Few know how ready they actually are. Answer a few questions and we'll send you your readiness score, how you compare to peers in financial crime, and where Aurora can help you move forward.

Aurora is a modular, agent-based suite built by Consortix on SAS Viya. It extends existing AML systems rather than replacing them, and it tackles the slowest, most manual parts of the scenario lifecycle while keeping every step transparent, documented, and auditable.

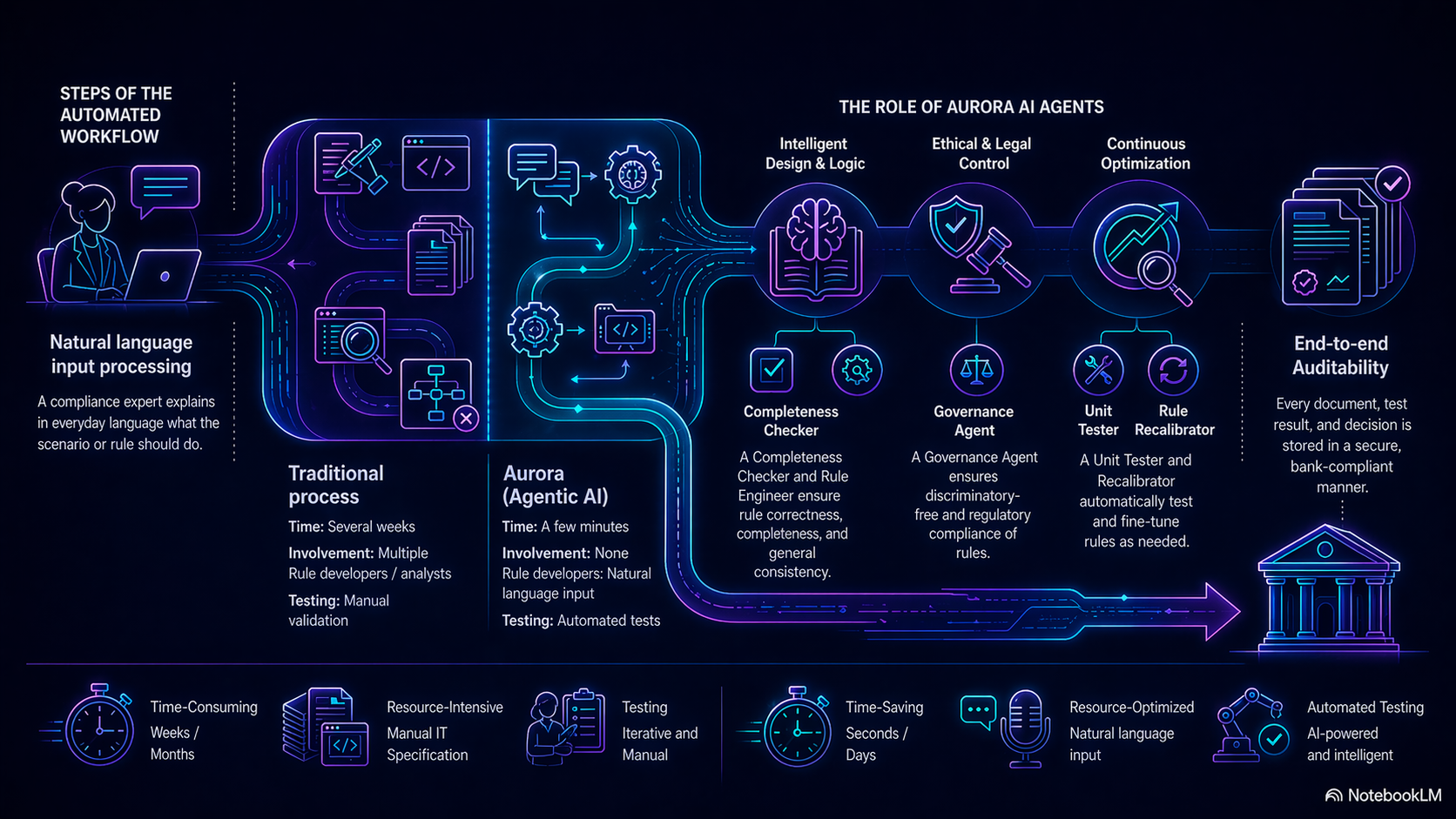

Scenario development traditionally runs through business requirements, IT specification, implementation, testing, tuning, approval, and go-live. That means weeks of calendar time, many handovers, and plenty of room for things to get lost between business and IT.

With Aurora, those same steps collapse into one directed workflow. The expert writes the requirement in natural language. The agents take over from there, turning it into a formal rule, testing it, validating compliance, and loading the result into production. What used to take weeks now takes days, and the scenario that comes out is documented, traceable, and always editable by the team responsible for it, so final control stays with the people who own the risk.

The pipeline is modular: each agent performs one auditable task and hands a structured artifact to the next.

Translates the confirmed description into formal, system-readable rule logic. The output is technically implementable, fully documented, and structured for audit.

Reads the natural-language description and verifies that all the information required to build the rule is present. If something is missing or ambiguous, it asks targeted questions and records the clarified input.

Checks whether the new rule overlaps with existing scenarios and verifies that the rule contains no discriminatory or legally impermissible filtering conditions. Regulatory and ethical compliance is enforced at the point of creation.

Generates test cases automatically, runs them against the proposed rule, and produces a pass/fail report. Where test results suggest refinement, it feeds observations back into the logic.

Updates the AI models already in production with fresh data produced by the new rule, keeping the broader detection system aligned with the latest logic.
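
For readers who think in code, the hand-off pattern described above can be pictured roughly as follows. This is a minimal illustrative sketch in Python, not Aurora's implementation: the `Artifact` class, the agent function names, the required fields, the prohibited attributes, and the rule format are all hypothetical, and the agent order is inferred from the descriptions (intake first, since the translation step works from a confirmed description). The model-update step is omitted.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Artifact:
    """Structured hand-off between agents; `trail` acts as a simple audit log."""
    data: dict
    trail: list[str] = field(default_factory=list)

Agent = Callable[[Artifact], Artifact]

# Hypothetical values, chosen only for illustration.
REQUIRED_FIELDS = ("subject", "threshold", "window_days")
PROHIBITED_ATTRIBUTES = {"nationality", "religion", "ethnicity"}
EXISTING_RULES = [{"field": "wire_transfer_total", "value": 50000}]

def intake(a: Artifact) -> Artifact:
    # Verify the requirement is complete; otherwise request clarification.
    missing = [f for f in REQUIRED_FIELDS if f not in a.data]
    if missing:
        raise ValueError(f"clarification needed for: {missing}")
    return a

def translate(a: Artifact) -> Artifact:
    # Turn the confirmed description into formal rule logic.
    a.data["rule"] = {
        "field": a.data["subject"],
        "op": ">",
        "value": a.data["threshold"],
        "window_days": a.data["window_days"],
    }
    return a

def compliance(a: Artifact) -> Artifact:
    # Reject impermissible filters and flag overlap with existing rules.
    rule = a.data["rule"]
    if rule["field"] in PROHIBITED_ATTRIBUTES:
        raise ValueError(f"impermissible condition on {rule['field']}")
    a.data["overlaps"] = [r for r in EXISTING_RULES if r["field"] == rule["field"]]
    return a

def evaluate(rule: dict, amount: float) -> bool:
    return amount > rule["value"]

def run_tests(a: Artifact) -> Artifact:
    # Generate boundary cases around the threshold and report pass/fail.
    rule = a.data["rule"]
    cases = [
        ("below_threshold", rule["value"] - 1, False),
        ("above_threshold", rule["value"] + 1, True),
    ]
    a.data["test_report"] = {
        name: "pass" if evaluate(rule, amount) == expected else "fail"
        for name, amount, expected in cases
    }
    return a

def run_pipeline(requirement: dict, agents: list[Agent]) -> Artifact:
    artifact = Artifact(data=dict(requirement))
    for agent in agents:
        artifact = agent(artifact)
        artifact.trail.append(agent.__name__)  # record each completed step
    return artifact

result = run_pipeline(
    {"subject": "cash_deposit_total", "threshold": 10000, "window_days": 7},
    [intake, translate, compliance, run_tests],
)
print(result.trail)
print(result.data["test_report"])
```

Because every step receives and returns the same structured artifact, a reviewer can inspect the output of any single agent, and the trail shows which steps a scenario actually passed through.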

At the 2025 SAS Hackathon, a global competition with over 2,000 participants from 66 countries, the Consortix-AURORA team won three categories: Banking, Agentic AI/Decisioning, and Trustworthy AI. The Banking win recognized Aurora's approach to AML scenario development. The other two awards reflected that automation speed and regulatory governance can coexist in the same solution. The overall champion will be announced at SAS Innovate 2026 in April, where Consortix is among the contenders.